Constraint Handling for Safe RL

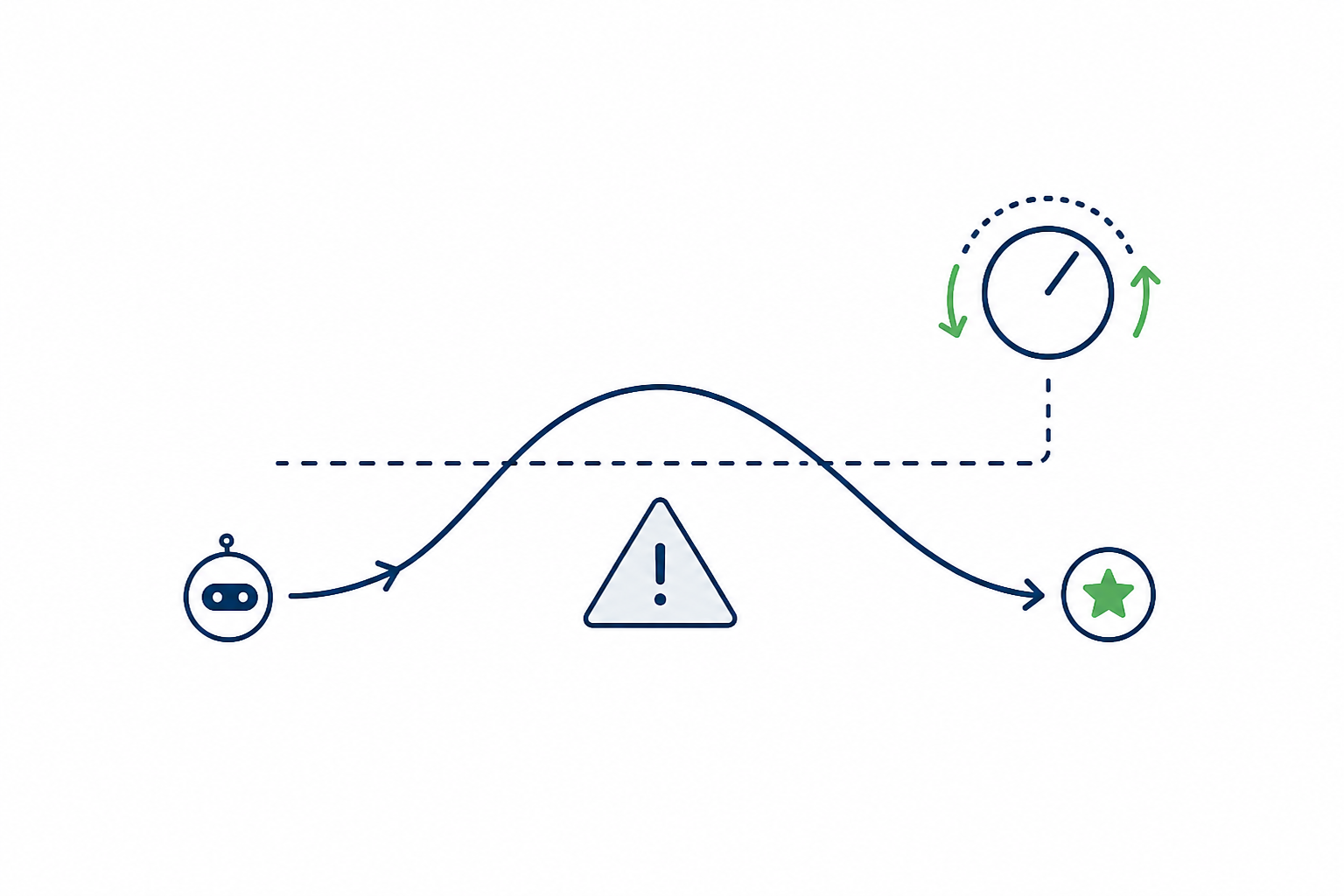

Changes Lagrangian or controller-style multiplier updates and cost-reward advantage mixing to improve reward while keeping episode cost below target.

Description

Safe RL: Constraint-Handling Mechanism Design

Research Question

Design a constraint-handling mechanism for safe reinforcement learning.

Your code goes in custom_lag.py, which defines a subclass of PPO registered as CustomLag. Reference implementations using a Lagrange multiplier (PPOLag) and a PID controller (CPPOPID) are provided as read-only *.edit.py baselines.

Background

Safe RL aims to maximize reward while keeping a long-run cost (e.g. the count of safety violations) below a fixed limit. The standard approach formulates the problem as a constrained MDP and converts it to an unconstrained dual problem via a multiplier lambda updated from the running cost violation. The mechanism that updates this multiplier and combines reward and cost advantages directly determines the agent's safety behavior:

- naive — constraint-unaware PPO baseline that ignores the safety constraint entirely; provides an upper bound on reward with no cost control.

- PPOLag — the multiplier is treated as a learnable parameter optimized by Adam to satisfy the dual objective. Simple but slow to react and prone to oscillation.

- CPPOPID: Stooke, Achiam and Abbeel, "Responsive Safety in Reinforcement Learning by PID Lagrangian Methods" (arXiv:2007.03964, ICML 2020). Replaces the integral-only Lagrange update with a PID controller; the benchmark uses the paper-style CPPOPID configuration with gains kp = 0.1, ki = 0.01, kd = 0.01 and a derivative delay window of 10 epochs (matching omnisafe/common/pid_lagrange.py).
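The two reference update styles listed above can be sketched side by side in plain Python. This is a minimal illustration, not the omnisafe code: the class names `AdamMultiplier` and `PIDMultiplier`, the learning rate, and the hand-written scalar Adam step are assumptions made for the example, and the PID sketch omits the EMA smoothing of the proportional and derivative terms described in the paper.

```python
import math
from collections import deque


class AdamMultiplier:
    """Hypothetical PPOLag-style multiplier: a scalar updated by one Adam step per epoch."""

    def __init__(self, cost_limit, lr=0.035, betas=(0.9, 0.999), eps=1e-8):
        self.cost_limit = cost_limit
        self.lr = lr  # assumed value, chosen only for illustration
        self.b1, self.b2 = betas
        self.eps = eps
        self.lam = 0.0
        self.m = 0.0  # first-moment estimate
        self.v = 0.0  # second-moment estimate
        self.t = 0

    def update(self, mean_ep_cost):
        # Dual loss is -lam * (cost - limit); its gradient w.r.t. lam is -(cost - limit).
        grad = -(mean_ep_cost - self.cost_limit)
        self.t += 1
        self.m = self.b1 * self.m + (1 - self.b1) * grad
        self.v = self.b2 * self.v + (1 - self.b2) * grad * grad
        m_hat = self.m / (1 - self.b1 ** self.t)
        v_hat = self.v / (1 - self.b2 ** self.t)
        self.lam -= self.lr * m_hat / (math.sqrt(v_hat) + self.eps)
        self.lam = max(0.0, self.lam)  # the multiplier must stay non-negative
        return self.lam


class PIDMultiplier:
    """Hypothetical CPPOPID-style multiplier using the gains quoted above."""

    def __init__(self, cost_limit, kp=0.1, ki=0.01, kd=0.01, d_delay=10):
        self.cost_limit = cost_limit
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        # Costs from the last d_delay epochs; index 0 is the oldest entry.
        self.past_costs = deque([0.0] * d_delay, maxlen=d_delay)
        self.lam = 0.0

    def update(self, mean_ep_cost):
        delta = mean_ep_cost - self.cost_limit
        # Integral term, kept non-negative.
        self.integral = max(0.0, self.integral + self.ki * delta)
        # Derivative term reacts only to cost rising relative to d_delay epochs ago.
        derivative = max(0.0, mean_ep_cost - self.past_costs[0])
        self.past_costs.append(mean_ep_cost)
        self.lam = max(0.0, self.kp * delta + self.integral + self.kd * derivative)
        return self.lam
```

Calling `update()` once per epoch with the mean episode cost returns the new multiplier. The Adam variant moves by roughly one learning-rate step regardless of how large the violation is, while the PID variant scales its response with the violation and its trend.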

You must design:

- A multiplier update rule in _update().

- An advantage combination formula in _compute_adv_surrogate() that blends the reward advantage adv_r and cost advantage adv_c using the current multiplier (e.g. (adv_r - lam * adv_c) / (1 + lam) in the standard Lagrangian baseline).
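The baseline formula quoted above, (adv_r - lam * adv_c) / (1 + lam), can be written out as a small standalone function. This sketch uses plain Python lists instead of tensors so it runs without dependencies; the function name `combine_advantages` is hypothetical and is not part of omnisafe.

```python
def combine_advantages(adv_r, adv_c, lam):
    """Blend per-step reward and cost advantages with multiplier lam.

    Dividing by (1 + lam) keeps the surrogate's scale bounded as lam grows,
    so a large multiplier shifts the weighting instead of inflating the step.
    """
    if lam < 0.0:
        raise ValueError("the multiplier must be non-negative")
    return [(r - lam * c) / (1.0 + lam) for r, c in zip(adv_r, adv_c)]


# With lam = 0 the cost advantage is ignored and the reward advantage passes through.
unconstrained = combine_advantages([1.0, 2.0], [0.5, 4.0], lam=0.0)

# With lam = 1 reward and cost are weighted equally.
balanced = combine_advantages([1.0, 2.0], [0.5, 4.0], lam=1.0)
```

As lam grows, the result approaches -adv_c, so the policy update is driven almost entirely by cost reduction.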

The PPO rollout loop, value functions, optimizer, environment interface, and registration plumbing are fixed.

Evaluation

Evaluated on Safety-Gymnasium navigation environments including:

- SafetyPointGoal1-v0 — point robot navigating to goals while avoiding hazards.

- SafetyCarGoal1-v0 — non-holonomic car robot with the same goal structure.

- SafetyPointButton1-v0 — point robot pressing goal buttons while avoiding hazards.

Each environment trains for the benchmark's fixed step budget. Metrics:

- Episode return (reward): higher is better.

- Episode cost (cost): lower is better, with a target threshold of 25.0 per the Safety-Gymnasium convention used in omnisafe.

A method is considered good only if it achieves high return while keeping the cost constraint under control across all environments.
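One way to read this criterion is as a feasibility check applied before returns are compared. The sketch below is an assumption about how such a check could look, not the benchmark's actual scoring code: the function name `summarize`, the result layout, and the choice to treat a cost exactly at the threshold as acceptable are all hypothetical.

```python
COST_LIMIT = 25.0  # target threshold stated in the task description


def summarize(results, cost_limit=COST_LIMIT):
    """Summarize final metrics given as {env_name: (episode_return, episode_cost)}."""
    # An environment violates the constraint when its episode cost exceeds the limit.
    violations = {
        env: cost for env, (_, cost) in results.items() if cost > cost_limit
    }
    mean_return = sum(ret for ret, _ in results.values()) / len(results)
    return {
        "feasible": not violations,  # True only if every environment is within the limit
        "violations": violations,
        "mean_return": mean_return,
    }


# Made-up numbers, purely to show the call shape.
example = summarize({
    "SafetyPointGoal1-v0": (20.0, 24.0),
    "SafetyCarGoal1-v0": (22.0, 31.5),
})
```

In this made-up example the second environment exceeds the threshold, so the run is reported as infeasible even though its return is the higher of the two.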

Code

    """Custom Lagrangian-based safe PPO for MLS-Bench.

    EDITABLE section: imports + constraint handling methods.
    FIXED sections: algorithm registration, learn() with metrics reporting.
    """

    from __future__ import annotations

    import time

    import numpy as np
    import torch

    from omnisafe.algorithms import registry
    from omnisafe.algorithms.on_policy.base.ppo import PPO

    # Copyright 2023 OmniSafe Team. All Rights Reserved.
    #
    # Licensed under the Apache License, Version 2.0 (the "License");
    # you may not use this file except in compliance with the License.
    # You may obtain a copy of the License at
    #
    #     http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    # limitations under the License.
    # ==============================================================================
    """Implementation of Lagrange."""

    # Copyright 2023 OmniSafe Team. All Rights Reserved.
    #
    # Licensed under the Apache License, Version 2.0 (the "License");
    # you may not use this file except in compliance with the License.
    # You may obtain a copy of the License at
    #
    #     http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    # limitations under the License.
    # ==============================================================================
    """Implementation of PID Lagrange."""

    # Copyright 2023 OmniSafe Team. All Rights Reserved.
    #
    # Licensed under the Apache License, Version 2.0 (the "License");
    # you may not use this file except in compliance with the License.
    # You may obtain a copy of the License at
    #
    #     http://www.apache.org/licenses/LICENSE-2.0
    #
    # Unless required by applicable law or agreed to in writing, software
    # distributed under the License is distributed on an "AS IS" BASIS,
    # WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
    # See the License for the specific language governing permissions and
    # limitations under the License.
    # ==============================================================================
    """Implementation of the PPO algorithm."""

Method Summary

Adaptive PID-Lag with asymmetric advantage

PID Lagrangian targeting 80% of the cost limit, with violation-quadratic gain scheduling, anti-windup integral, predictive lookahead, and 1.5x cost penalty on cost-increasing actions.

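Per epoch:

1. (formula missing)

2. gain scale

3. EMA smoothed

4. integral: conditional add (one increment if the condition holds, another otherwise), then clip

5. EMA cost

6. predictive trend

7. (formula missing)

8. (formula missing)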
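Two of the ingredients named in the summary, the tightened target and the asymmetric cost penalty, can be illustrated in isolation. The exact per-epoch formulas are not available here, so the sketch below is only one plausible reading and not the method's actual code. It assumes that "cost-increasing actions" means steps with a positive cost advantage and that the baseline's (1 + lam) normalization is kept. The function names are hypothetical.

```python
def tightened_target(cost_limit, fraction=0.8):
    """Cost level the controller aims for: a fraction of the real limit (assumed 80%)."""
    return fraction * cost_limit


def asymmetric_combine(adv_r, adv_c, lam, penalty=1.5):
    """Blend advantages, weighting positive cost advantages by `penalty`.

    Assumption: a step counts as cost-increasing when its cost advantage is > 0.
    Steps with a non-positive cost advantage use the standard Lagrangian weighting.
    """
    combined = []
    for r, c in zip(adv_r, adv_c):
        weight = penalty if c > 0.0 else 1.0
        combined.append((r - lam * weight * c) / (1.0 + lam))
    return combined


# Two steps with equal reward advantage and cost advantages of opposite sign.
mixed = asymmetric_combine([1.0, 1.0], [2.0, -2.0], lam=1.0)
target = tightened_target(25.0)
```

Under this reading, the controller starts raising the multiplier before the real limit is reached, and the policy is pushed away from cost-increasing steps harder than it is rewarded for cost-decreasing ones.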