Evolutionary Operators for Continuous Black-Box Optimization

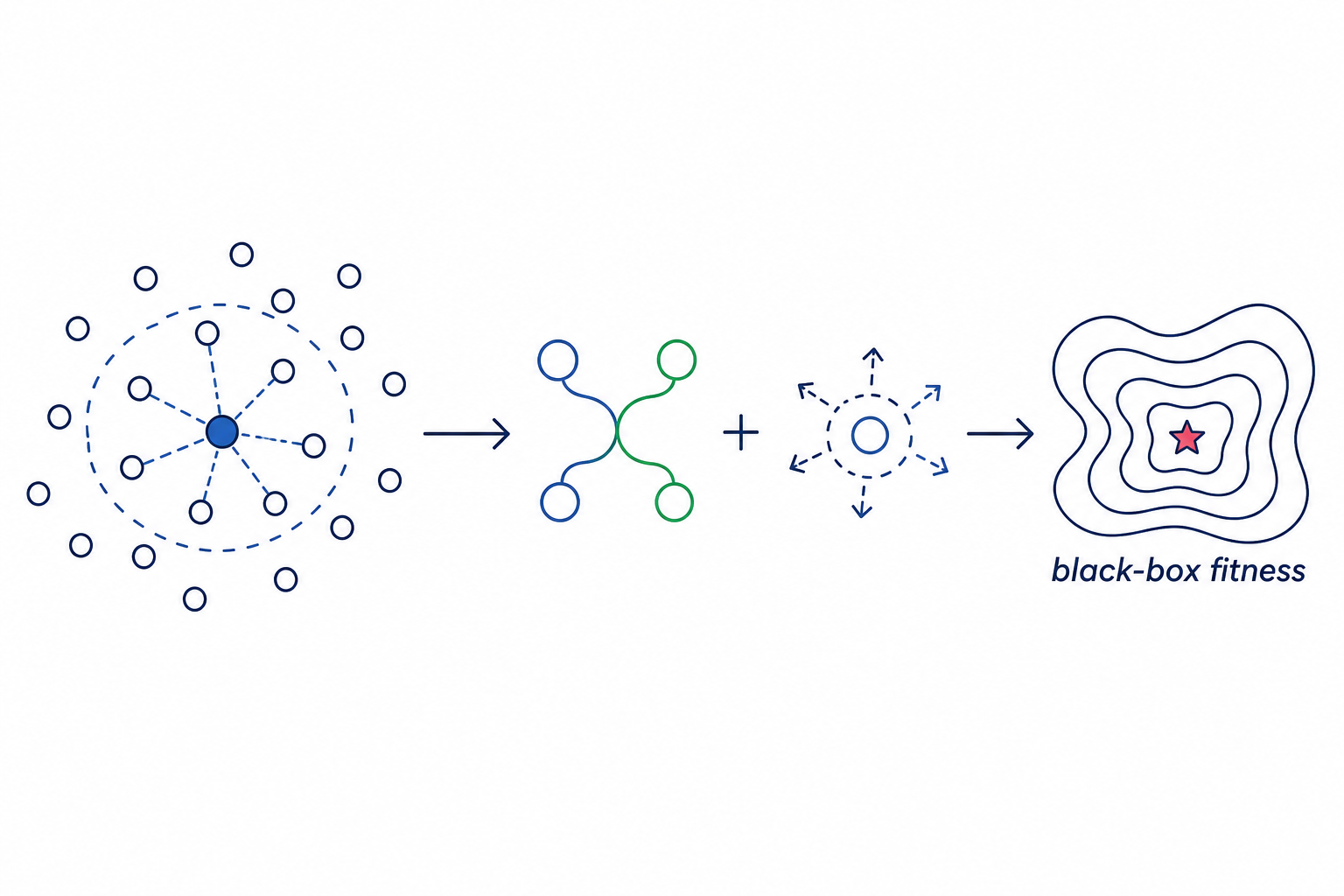

Redesign selection, crossover, mutation, or the evolutionary loop itself to lower the final best fitness and speed up convergence on continuous benchmark functions.

Description

Evolutionary Optimization Strategy Design

Research Question

Design a novel combination of selection, crossover, and mutation operators (and/or a novel evolutionary loop) for continuous black-box optimization that outperforms standard approaches across multiple benchmark functions.

Background

Evolutionary algorithms (EAs) are population-based metaheuristics for black-box optimization. The three core operators — selection, crossover, and mutation — together with the overall evolutionary loop design, determine an EA's performance. Standard approaches include:

- Genetic Algorithms (GA) with Tournament selection + Simulated Binary Crossover (SBX) + Polynomial Mutation (Deb and Agrawal, 1995).

- CMA-ES — Covariance Matrix Adaptation Evolution Strategy (Hansen and Ostermeier, "Completely Derandomized Self-Adaptation in Evolution Strategies", Evolutionary Computation 9(2), 2001).

- Differential Evolution (DE) — uses vector differences between population members for mutation (Storn and Price, "Differential Evolution", J. Global Optim. 11, 1997).

- L-SHADE — Success-History based Adaptive DE with Linear population reduction (Tanabe and Fukunaga, "Improving the Search Performance of SHADE Using Linear Population Size Reduction", IEEE CEC 2014; CEC 2014 winner).

Each has strengths on different function landscapes (multimodal, ill-conditioned, high-dimensional), but no single strategy dominates all.

Task

Modify the editable section of custom_evolution.py to implement a novel or improved evolutionary strategy. You may modify:

- `custom_select(population, k, toolbox)`: selection operator.
- `custom_crossover(ind1, ind2)`: crossover/recombination operator.
- `custom_mutate(individual, lo, hi)`: mutation operator.
- `run_evolution(...)`: the full evolutionary loop (you can restructure the algorithm entirely).
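
As an illustration of the operator interface listed above, here is a minimal sketch of a `custom_mutate` with the three-argument signature from the task. The Gaussian perturbation, the extra keyword parameters `sigma_frac` and `indpb`, and the one-element tuple return (the convention used by DEAP's built-in mutation operators) are assumptions; the fixed harness may expect something different.

```python
import random


def custom_mutate(individual, lo, hi, sigma_frac=0.1, indpb=None):
    """Gaussian mutation with clamping to [lo, hi] (illustrative sketch).

    sigma_frac and indpb are hypothetical extras with defaults, so the
    three-argument call custom_mutate(individual, lo, hi) still works.
    """
    n = len(individual)
    # Default per-gene probability 1/n: on average one gene changes.
    p = indpb if indpb is not None else 1.0 / n
    sigma = sigma_frac * (hi - lo)
    for j in range(n):
        if random.random() < p:
            x = individual[j] + random.gauss(0.0, sigma)
            individual[j] = min(hi, max(lo, x))  # clamp into the domain
    # Assumed return shape: a one-element tuple, as DEAP mutators return.
    return (individual,)
```

The individual is modified in place, so its fitness has to be re-evaluated by the caller before it is compared with others.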

The DEAP library (deap.base, deap.creator, deap.tools) is available. You may also use numpy, scipy, math, and random.

Interface

- Individuals: lists of floats, each with a `.fitness.values` attribute (tuple of one float for minimization).
- `run_evolution` must return `(best_individual, fitness_history)` where `fitness_history` is a list of best fitness per generation.
- TRAIN_METRICS: print `TRAIN_METRICS gen=G best_fitness=F avg_fitness=A` periodically (every 50 generations).
- Respect the function signature and return types; the evaluation harness below the editable section is fixed.
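
The interface contract above can be sketched as a tiny loop. The real `run_evolution` signature is fixed by the harness and is not shown here, so the function name `run_evolution_sketch`, its parameters, the stand-in `Individual` class (used in place of a DEAP `creator` class), and the number format of the metrics line are all assumptions made for illustration.

```python
import random


class _Fitness:
    """Stand-in for a DEAP fitness object; holds a one-element tuple."""

    def __init__(self):
        self.values = ()


class Individual(list):
    """Stand-in for a DEAP individual: a list of floats plus .fitness."""

    def __init__(self, genes):
        super().__init__(genes)
        self.fitness = _Fitness()


def run_evolution_sketch(evaluate, dim, lo, hi, pop_size=20, n_gen=100, seed=0):
    """Hypothetical loop: Gaussian perturbation with greedy replacement."""
    rng = random.Random(seed)
    pop = [Individual(rng.uniform(lo, hi) for _ in range(dim))
           for _ in range(pop_size)]
    for ind in pop:
        ind.fitness.values = (evaluate(ind),)
    best = min(pop, key=lambda ind: ind.fitness.values[0])
    fitness_history = []
    sigma = 0.1 * (hi - lo)
    for gen in range(1, n_gen + 1):
        for i, parent in enumerate(pop):
            child = Individual(min(hi, max(lo, x + rng.gauss(0.0, sigma)))
                               for x in parent)
            child.fitness.values = (evaluate(child),)
            if child.fitness.values[0] <= parent.fitness.values[0]:
                pop[i] = child  # greedy: keep the child only if not worse
        fits = [ind.fitness.values[0] for ind in pop]
        gen_best = min(pop, key=lambda ind: ind.fitness.values[0])
        if gen_best.fitness.values[0] < best.fitness.values[0]:
            best = gen_best
        fitness_history.append(best.fitness.values[0])  # one entry per generation
        if gen % 50 == 0:
            avg = sum(fits) / len(fits)
            print(f"TRAIN_METRICS gen={gen} best_fitness={min(fits):.6e} "
                  f"avg_fitness={avg:.6e}")
    return best, fitness_history
```

Calling `run_evolution_sketch(lambda x: sum(v * v for v in x), 5, -5.0, 5.0)` returns the best individual found and a 100-entry history.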

Evaluation

Strategies are evaluated on four benchmarks (all minimization; lower is better):

| Benchmark | Function | Dimensions | Domain | Global Minimum |
|---|---|---|---|---|
| rastrigin-30d | Rastrigin | 30 | [-5.12, 5.12] | 0 |
| rosenbrock-30d | Rosenbrock | 30 | [-5, 10] | 0 |
| ackley-30d | Ackley | 30 | [-32.768, 32.768] | 0 |
| rastrigin-100d | Rastrigin | 100 | [-5.12, 5.12] | 0 |
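
For reference, the three functions in the table can be written down from their standard textbook definitions. The harness's own implementations are not shown in this document, so treat the following as a sketch that may differ in detail (for example in shifts or scaling).

```python
import math


def rastrigin(x):
    """Rastrigin: 10*n + sum(x_i^2 - 10*cos(2*pi*x_i)); minimum 0 at x = 0."""
    return 10.0 * len(x) + sum(
        v * v - 10.0 * math.cos(2.0 * math.pi * v) for v in x
    )


def rosenbrock(x):
    """Rosenbrock: sum of 100*(x_{i+1} - x_i^2)^2 + (1 - x_i)^2; minimum 0 at x = 1."""
    return sum(
        100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (1.0 - x[i]) ** 2
        for i in range(len(x) - 1)
    )


def ackley(x):
    """Ackley with the usual constants a=20, b=0.2, c=2*pi; minimum 0 at x = 0."""
    n = len(x)
    mean_sq = sum(v * v for v in x) / n
    mean_cos = sum(math.cos(2.0 * math.pi * v) for v in x) / n
    return (-20.0 * math.exp(-0.2 * math.sqrt(mean_sq))
            - math.exp(mean_cos) + 20.0 + math.e)
```

Rastrigin and Ackley are highly multimodal, while Rosenbrock has a narrow curved valley, which is why the set exercises different operator strengths.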

Metrics: best_fitness (final best value, lower is better) and convergence_gen (generation reaching near-final fitness).
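
The exact rule behind convergence_gen is not spelled out here. One plausible reading, sketched below, is the first generation whose best fitness has closed all but a small fraction of the gap between the initial and final values. The function body, the `rel_tol` parameter, and the 0-based indexing are assumptions, not the harness's definition.

```python
def convergence_gen(fitness_history, rel_tol=0.01):
    """First 0-based generation within rel_tol of the total improvement.

    Hypothetical definition: threshold = final + rel_tol * (initial - final).
    """
    initial = fitness_history[0]
    final = fitness_history[-1]
    threshold = final + rel_tol * (initial - final)
    for gen, value in enumerate(fitness_history):
        if value <= threshold:
            return gen
    return len(fitness_history) - 1  # defensive fallback; the final entry always qualifies
```

For a history of `[100.0, 50.0, 10.0, 1.5, 1.0, 1.0]` the threshold is 1.99, so the sketch reports generation 3.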

Baselines (paper-cited reference implementations)

- ga_sbx: Genetic Algorithm with Simulated Binary Crossover and Polynomial Mutation (Deb and Agrawal, 1995); paper-default `eta_c = eta_m = 20`, mutation probability `1/n`.
- de: Classical DE/rand/1/bin (Storn and Price, 1997); paper-default `F = 0.5`, `CR = 0.9`.
- lshade: L-SHADE (Tanabe and Fukunaga, IEEE CEC 2014); paper-default initial population `18 * n`, archive size `2.6 * pop`, history memory `H = 6`, linear population reduction to `N_min = 4`.
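
To make the de baseline concrete, the following is a minimal sketch of how one DE/rand/1/bin trial vector is built with the defaults quoted above. The function name `de_rand_1_bin_trial` and its `rng` parameter are hypothetical, and boundary handling is left out; the reference implementation may differ.

```python
import random


def de_rand_1_bin_trial(pop, i, F=0.5, CR=0.9, rng=random):
    """Build one DE/rand/1/bin trial vector for target index i."""
    n = len(pop[i])
    # Three mutually distinct donors, all different from the target.
    r1, r2, r3 = rng.sample([k for k in range(len(pop)) if k != i], 3)
    j_rand = rng.randrange(n)  # this gene is always taken from the mutant
    trial = list(pop[i])
    for j in range(n):
        if rng.random() < CR or j == j_rand:
            # rand/1 mutation: base vector plus one scaled difference.
            trial[j] = pop[r1][j] + F * (pop[r2][j] - pop[r3][j])
    return trial
```

The trial replaces `pop[i]` only if its fitness is not worse, which is the greedy one-to-one selection that DE variants, including L-SHADE, share.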

Code

    #!/usr/bin/env python3
    """Evolutionary Optimization Strategy Benchmark.

    This script benchmarks an evolutionary optimization strategy on standard
    continuous optimization test functions (Rastrigin, Rosenbrock, Ackley).
    The goal is to minimize each function by designing effective selection,
    crossover, and mutation operators.

    Usage:
        python deap/custom_evolution.py --function rastrigin --dim 30 --seed 42
    """

    import argparse
    import math
    import random

Method Summary

ERC-LSHADE (Eigenvector-Rotated)

L-SHADE current-to-pbest with bounce-back boundaries plus a condition-number-driven probability of doing the binomial crossover in eigenvector space.
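
The eigenvector-space crossover mentioned above can be sketched as follows: rotate parent and mutant into the eigenbasis of the population covariance, mix coordinates binomially there, and rotate back. The helper names `eigenbasis` and `rotated_binomial_crossover` are hypothetical, and the rule that maps the condition number to a probability is not given in this summary, so it is deliberately omitted.

```python
import numpy as np


def eigenbasis(points):
    """Eigen-decomposition of the sample covariance of `points` (rows = individuals).

    Returns eigenvalues in ascending order and a matrix B whose columns
    are the matching orthonormal eigenvectors.
    """
    cov = np.cov(np.asarray(points, dtype=float), rowvar=False)
    eigvals, B = np.linalg.eigh(cov)
    return eigvals, B


def rotated_binomial_crossover(parent, mutant, B, CR, rng):
    """Binomial crossover carried out in the coordinate system given by B."""
    parent = np.asarray(parent, dtype=float)
    mutant = np.asarray(mutant, dtype=float)
    p_rot = B.T @ parent  # B is orthogonal, so B.T inverts the rotation
    m_rot = B.T @ mutant
    n = parent.size
    mask = rng.random(n) < CR
    mask[rng.integers(n)] = True  # at least one coordinate from the mutant
    trial_rot = np.where(mask, m_rot, p_rot)
    return B @ trial_rot  # rotate back to the original coordinates
```

Mixing coordinates in the eigenbasis makes the crossover insensitive to rotations of the search space, which is the usual motivation for using it on ill-conditioned, non-separable functions. A condition number could be taken as the ratio of the largest to the smallest eigenvalue returned by `eigenbasis`, though that is also an assumption here.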

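1. Initialize L-SHADE: history size H = 6, all M_F entries = 0.5, all M_CR entries = 0.8, archive A, N_init = pop_size, N_min = 4.
2. Every 25 generations: run PCA on the top N/3 of the population to obtain the eigenbasis B.
3. For each individual i: sample F_i and CR_i, then apply current-to-pbest mutation.
4. With a condition-number-driven probability, perform the binomial crossover in the eigenbasis B; otherwise use standard binomial crossover.
5. Bounce-back: applied to trial components that fall outside the bounds.
6. Greedy selection; on improvement, push the parent to the archive.
7. Weighted Lehmer mean update for M_F, weighted arithmetic mean for M_CR.
8. Linear population reduction from N_init to N_min; on stagnation (40 generations), replace the worst 1/5 of the population with random individuals.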
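
Three of the building blocks named in steps 3, 7 and 8 have well-known closed forms from the SHADE/L-SHADE literature and can be sketched in a few lines. The function names are hypothetical, and the `progress` argument (fraction of the budget used, between 0 and 1) is an assumption, since this summary does not say whether progress is measured in generations or in function evaluations.

```python
def current_to_pbest_1(x_i, x_pbest, x_r1, x_r2, F):
    """Mutant v = x_i + F*(x_pbest - x_i) + F*(x_r1 - x_r2).

    In L-SHADE, x_pbest is drawn from the top p fraction of the population
    and x_r2 may come from the population or the archive.
    """
    return [xi + F * (pb - xi) + F * (a - b)
            for xi, pb, a, b in zip(x_i, x_pbest, x_r1, x_r2)]


def weighted_lehmer_mean(values, improvements):
    """sum(w*s^2) / sum(w*s), with weights proportional to fitness improvement."""
    total = sum(improvements)
    weights = [d / total for d in improvements]
    num = sum(w * v * v for w, v in zip(weights, values))
    den = sum(w * v for w, v in zip(weights, values))
    return num / den


def weighted_arithmetic_mean(values, improvements):
    """sum(w*s), with the same improvement-proportional weights."""
    total = sum(improvements)
    return sum(d / total * v for d, v in zip(improvements, values))


def linear_pop_size(n_init, n_min, progress):
    """Population size shrinking linearly from n_init (progress=0) to n_min (progress=1)."""
    return round(n_init + (n_min - n_init) * progress)
```

The Lehmer mean weights larger successful values more heavily than the arithmetic mean does, which biases the F memory toward larger step sizes.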